Introduction

Hey it's a me again @drifter1! Today we continue with the Parallel Programming series about the OpenMP API. The previous article was an introduction, which I suggest everyone read if they haven't already! Today we will get into the details of how we define Parallel Regions. So, without further ado, let's dive straight into it!

GitHub Repository

Requirements - Prerequisites

- Basic understanding of the Programming Language C, or even C++

- Familiarity with Parallel Computing/Programming in general

- Compiler

- Linux users: GCC (GNU Compiler Collection) installed

- Windows users: MinGW32/64 - To avoid unnecessary problems I suggest using a Linux VM, Cygwin or even a WSL Environment on Windows 10

- MacOS users: Install GCC using brew or use the Clang compiler

- For more Compilers & Tools check out: https://www.openmp.org//resources/openmp-compilers-tools/

- Previous Articles of the Series

Quick Recap

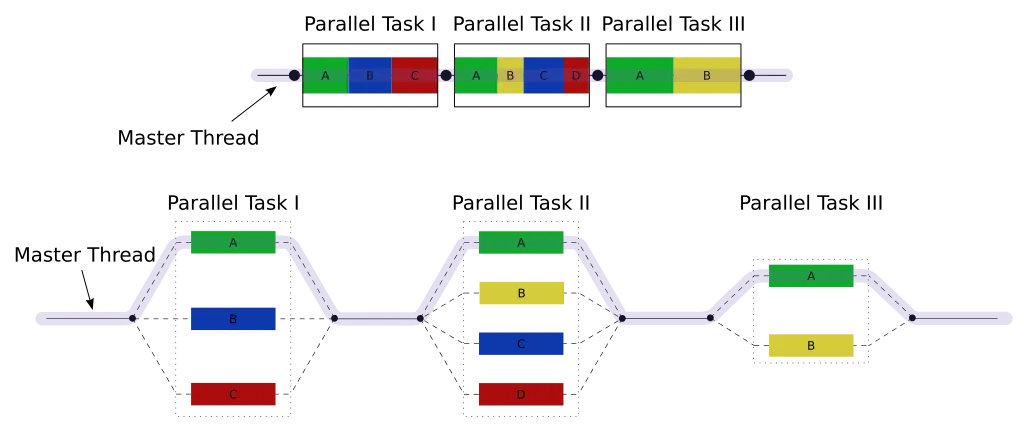

OpenMP is a parallel programming API that's meant for multi-threaded, shared-memory parallelism. It's built up of compiler directives, runtime library routines and environment variables. OpenMP offers a level of abstraction without taking away the full control of parallelization from the programmer. OpenMP programs follow the fork-join model, where a master thread forks into a team of threads in parallel regions and joins back into one when the parallel region finishes.

The definition of a compiler directive in the C/C++ implementation of OpenMP is as follows:

#pragma omp directive-name [clause ...]

With GCC, OpenMP programs are compiled using the -fopenmp flag:

gcc -o output-name file-name.c -fopenmp

g++ -o output-name file-name.cpp -fopenmp

Parallel Region Construct

To FORK into a team of threads and start parallel execution of a block of code/statements on multiple threads, you have to use the parallel region construct or directive. After the parallel statements are finished the threads of course JOIN back into one.

Using the parallel region construct, defining a parallel block of code (or parallel region) in OpenMP is as simple as:

#pragma omp parallel

{

/* This code runs in parallel */

}

By default, this construct creates a team of N threads, where N is determined at runtime, typically by the number of CPU threads that are available. For example, on a system with a 6-core CPU with 2 threads on each core, N would be 12. The threads that are created are numbered from 0 (master thread) to N-1.

At the end of the parallel region there is an implied barrier: every thread of the team waits there, and only the master thread continues execution past that point. Because the master thread of the team always has thread number 0, checking whether a thread is the master is as simple as checking if its number is 0 or not.

The construct definition that we showcased so far is of course only the tip of the iceberg(!). Using clauses after "parallel", it's easy to define:

- Conditional parallelism - if clause

- Number of threads - num_threads clause

- Default data sharing behavior - default clause

- List of private variables - private clause

- List of shared variables - shared clause

Thread Management

Defining the number of threads

The number of threads to be executed in a parallel region can be defined using the num_threads clause:

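#pragma omp parallel num_threads(N)

Alternatively, the number of threads for the parallel regions that follow can be set by calling the runtime library routine omp_set_num_threads:

int n;

omp_set_num_threads(n);

Retrieving the number of threads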

The number of threads that are executing in the current region can be retrieved using the runtime library routine omp_get_num_threads. When called in a sequential part of the program, the routine returns 1.

The routine takes no parameter and returns an integer:

int n;

n = omp_get_num_threads();

Retrieving the thread number

The thread number of a calling thread can be returned using the routine omp_get_thread_num. When inside a sequential region, the function returns 0, else it returns the thread number within the current team:

int thread_num;

thread_num = omp_get_thread_num();

Retrieving the maximum number of threads

To retrieve the upper bound of threads that can be used to form a team within a parallel region/construct, the routine omp_get_max_threads has to be used. This function tells us the number of threads that will be used for a parallel region that has no num_threads clause, so it also reflects an earlier call to omp_set_num_threads:

int max_n;

max_n = omp_get_max_threads();

Basic Parallel Region Clauses

Let's first explain the if, num_threads, default, private and shared clauses, before getting into example programs.

if clause

Using an if clause, parallelism can be made conditional. This means that parallelism can be enabled/disabled based on the evaluation of an expression inside the if clause. If the expression inside the parentheses of the if clause evaluates to true (non-zero), then the parallel region will execute in parallel. On the other hand, when the expression evaluates to false (0), the region will execute sequentially, using only one thread. For example, this can be quite useful for enabling/disabling parallelism using a global flag.

In C/C++ code:

#pragma omp parallel if(expression)

{

/*

Executes in parallel when expression evaluates to true (1)

Executes sequentially when expression evaluates to false (0)

*/

}

num_threads clause

As we already mentioned earlier, the num_threads clause is used for directly specifying the number of threads to be executed.

In C/C++ code:

#pragma omp parallel num_threads(N)

{

/* A team of N threads executes the code block in parallel */

}

default clause

Using the default clause we can specify the default data sharing behavior.

The data sharing behavior can be:

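- private - all variables are private by default (in C/C++ this argument is only accepted from OpenMP 5.1 onward)

- firstprivate - all variables are private by default, but initialized to the value of the shared variable (in C/C++ likewise only from OpenMP 5.1 onward)

- lastprivate - all variables are private by default, but the value of the last iteration is assigned back to the shared variable (this exists only as a separate clause on constructs such as loops, so it can't be used in this parallel construct)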

- shared - all variables are shared by default

- none - the programmer has to explicitly specify the data-sharing of all variables

#pragma omp parallel default(private | firstprivate | shared | none)

private clause

Using the private clause we can specify the variables that should be private.

In C/C++ code:

#pragma omp parallel private( list of variables )

shared clause

Using the shared clause we can specify the variables that should be shared.

In C/C++ code:

#pragma omp parallel shared( list of variables )

Parallel Region Example Programs

Basic Parallel Region

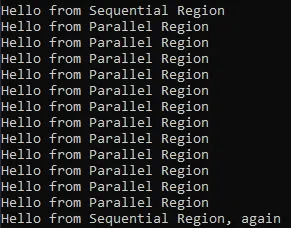

Let's print out a simple message in both sequential and parallel regions of code.

#include <omp.h>

#include <stdio.h>

int main(){

// Sequential Region

printf("Hello from Sequential Region\n");

// Parallel Region

#pragma omp parallel

{

printf("Hello from Parallel Region\n");

}

// Sequential Region

printf("Hello from Sequential Region, again\n");

return 0;

}

How many times the message of the parallel region gets printed depends, of course, on the system! On my system with a 6-core CPU, and so 12 threads, a total of 12 messages were printed out in the parallel region.

Also note that the 2nd sequential section gets executed after the parallel region finishes/joins back into one!

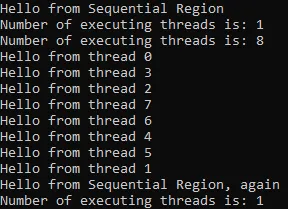

Thread Management

Let's extend the previous program to:

- define a specific number of threads to be executed using the num_threads clause

- make each thread in the parallel region print out its thread number

- print out the number of executing threads in the sequential and parallel regions of code (master thread only)

#include <omp.h>

#include <stdio.h>

int main(){

// Sequential Region

printf("Hello from Sequential Region\n");

printf("Number of executing threads is: %d\n", omp_get_num_threads());

// Parallel Region

#pragma omp parallel num_threads(8)

{

// Only master thread

if(omp_get_thread_num() == 0){

printf("Number of executing threads is: %d\n", omp_get_num_threads());

}

// All threads

printf("Hello from thread %d\n", omp_get_thread_num());

}

// Sequential Region

printf("Hello from Sequential Region, again\n");

printf("Number of executing threads is: %d\n", omp_get_num_threads());

return 0;

}

A total of 9 messages were now printed out in the parallel region: one from each of the 8 threads with its thread number, plus the thread count printed by the master thread.

Conditional Parallelism

Let's again modify the previous code to run in parallel only when a specific flag is set to true. For that we have to use the if clause.

The modified code is:

#include <omp.h>

#include <stdio.h>

#define PARALLELISM_ENABLED 0

int main(){

...

// Parallel Region

#pragma omp parallel if(PARALLELISM_ENABLED) num_threads(8)

...

}

Data Sharing

Lastly, let's also get into data sharing by:

- defining a variable thread_num for storing the thread number of each thread

- specifying the variable as private using the private clause

#include <omp.h>

#include <stdio.h>

#define PARALLELISM_ENABLED 1

int main(){

int thread_num;

...

// Parallel Region

#pragma omp parallel if(PARALLELISM_ENABLED) private(thread_num) num_threads(8)

{

// Retrieve thread number

thread_num = omp_get_thread_num();

// Only master thread

if(thread_num == 0){

printf("Number of executing threads is: %d\n", omp_get_num_threads());

}

// All threads

printf("Hello from thread %d\n", thread_num);

}

...

}

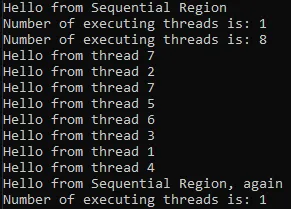

If we hadn't declared the variable as private, it would be shared by default, making the output unpredictable.

Without any synchronization, all threads write to the same variable. In my run, thread 0 couldn't keep up with thread 7, making its output 7 instead of 0. In loops things become even more dangerous! Thus, always make sure to take care of shared variables properly!

RESOURCES:

References

- https://www.openmp.org/resources/refguides/

- https://computing.llnl.gov/tutorials/openMP/

- https://bisqwit.iki.fi/story/howto/openmp/

- https://nanxiao.gitbooks.io/openmp-little-book/content/

Previous articles about the OpenMP API

- OpenMP API Introduction → OpenMP API, Abstraction Benefits, Fork-Join Model, General Directive Format, Compilation

Final words | Next up

And this is actually it for today's post! Next time we will get into Parallel Loops...

See ya!

Keep on drifting!